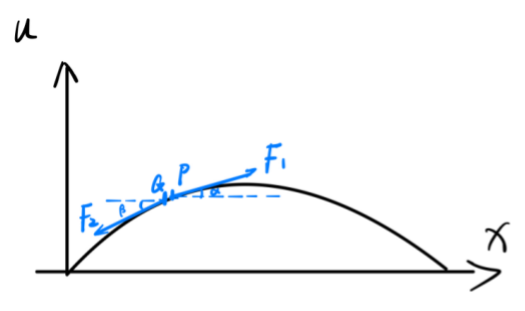

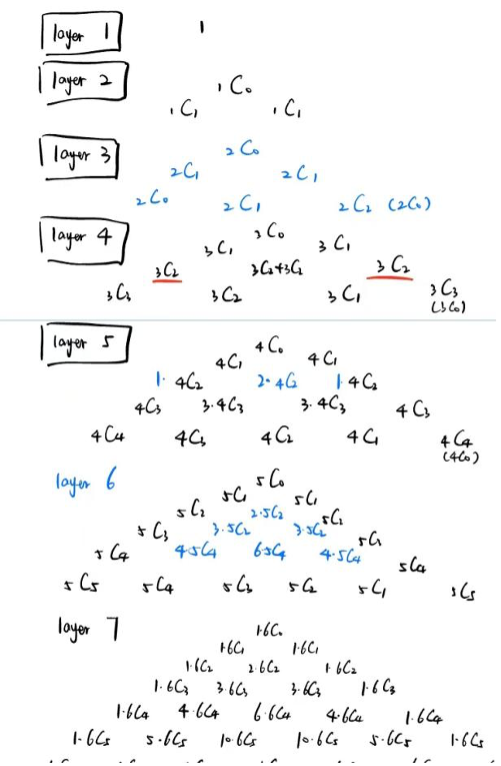

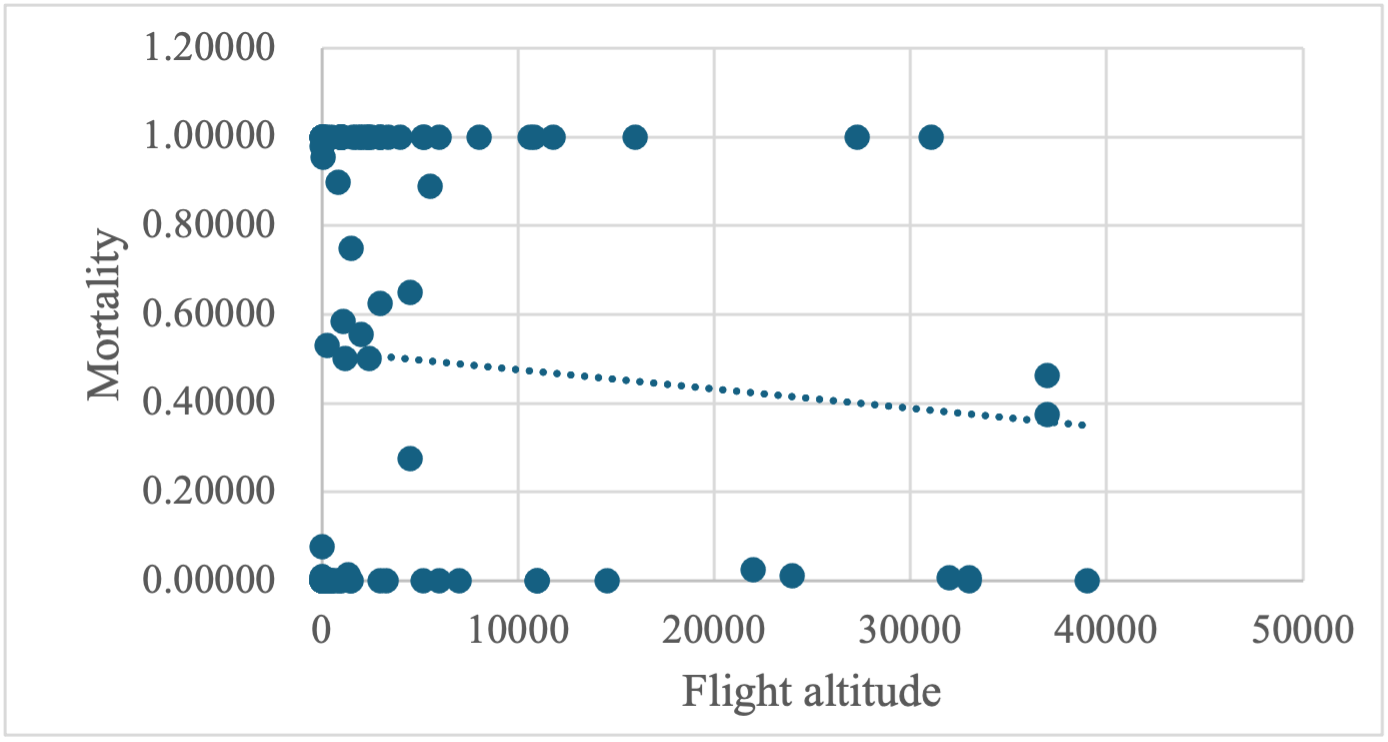

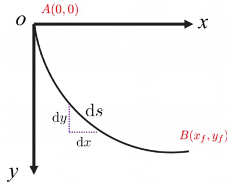

As machine learning grows in popularity and algorithms for Multi-Armed Bandit (MAB) problems are used ever more frequently, choosing an appropriate algorithm for contexts with different characteristics has become an important topic. This study therefore compares three algorithms, the Explore-Then-Commit (ETC) algorithm, the Upper Confidence Bound (UCB) algorithm, and the Thompson sampling algorithm, by introducing the core idea, advantages, and disadvantages of each. It also surveys the existing optimised variants of the three algorithms, providing a reference for selecting a suitable one. The ETC algorithm is simple. The UCB algorithm optimises the interface between the exploration and exploitation phases of the ETC algorithm and thus performs relatively consistently. The Thompson sampling algorithm outperforms the first two in many cases because it naturally balances exploration and exploitation. ETC is suitable for static environments; UCB is suitable for scenarios that call for continual exploration and for scenarios in which regret must be reduced over time as more data becomes available; and Thompson sampling is suitable for highly uncertain environments.
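To make the comparison concrete, the three strategies can be sketched on a small simulated bandit. The following is a minimal illustration only, not the study's implementation: it assumes Bernoulli (0/1) rewards, uses the UCB1 form of the confidence bonus, and gives Thompson sampling a uniform Beta(1, 1) prior. The function names (`pull`, `run_etc`, `run_ucb`, `run_thompson`, `pseudo_regret`), the exploration length `m`, and the arm means are hypothetical choices made for this example.

```python
import math
import random


def pull(means, arm, rng):
    """Draw a Bernoulli reward (0 or 1) from the chosen arm (assumed reward model)."""
    return 1 if rng.random() < means[arm] else 0


def run_etc(means, horizon, m, rng):
    """Explore-Then-Commit: pull every arm m times (m >= 1), then commit to the best empirical arm."""
    k = len(means)
    counts, sums, pulls = [0] * k, [0.0] * k, []
    committed = None
    for t in range(horizon):
        if t < m * k:
            arm = t % k  # round-robin exploration phase
        else:
            if committed is None:  # choose once, at the end of exploration
                committed = max(range(k), key=lambda a: sums[a] / counts[a])
            arm = committed
        reward = pull(means, arm, rng)
        counts[arm] += 1
        sums[arm] += reward
        pulls.append(arm)
    return pulls


def run_ucb(means, horizon, rng):
    """UCB1: play the arm with the largest empirical mean plus confidence bonus."""
    k = len(means)
    counts, sums, pulls = [0] * k, [0.0] * k, []
    for t in range(horizon):
        if t < k:
            arm = t  # play each arm once so every count is positive
        else:
            arm = max(
                range(k),
                key=lambda a: sums[a] / counts[a]
                + math.sqrt(2 * math.log(t) / counts[a]),
            )
        reward = pull(means, arm, rng)
        counts[arm] += 1
        sums[arm] += reward
        pulls.append(arm)
    return pulls


def run_thompson(means, horizon, rng):
    """Thompson sampling with a Beta(1, 1) prior on each arm's success probability."""
    k = len(means)
    alpha, beta, pulls = [1] * k, [1] * k, []
    for _ in range(horizon):
        # Sample one plausible mean per arm from its posterior; play the largest.
        arm = max(range(k), key=lambda a: rng.betavariate(alpha[a], beta[a]))
        reward = pull(means, arm, rng)
        alpha[arm] += reward
        beta[arm] += 1 - reward
        pulls.append(arm)
    return pulls


def pseudo_regret(means, pulls):
    """Sum of gaps between the best arm's mean and the mean of each arm played."""
    best = max(means)
    return sum(best - means[a] for a in pulls)


if __name__ == "__main__":
    rng = random.Random(0)
    means = [0.2, 0.5, 0.8]  # hypothetical arm means
    for name, pulls in [
        ("ETC", run_etc(means, 2000, 20, rng)),
        ("UCB1", run_ucb(means, 2000, rng)),
        ("Thompson", run_thompson(means, 2000, rng)),
    ]:
        print(name, round(pseudo_regret(means, pulls), 1))
```

In this sketch ETC fixes its choice after `m` pulls per arm and never revisits it, UCB1 keeps exploring through a bonus that shrinks as an arm is pulled more often, and Thompson sampling explores through posterior randomness. The regret figures printed by the script depend on the seed, horizon, and arm means, so they should be read as one simulated run rather than as a general ranking.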

Research Article

Open Access